Security for AI agents

Intercept and control AI agent activity

Viberails intercepts, audits, and validates tool calls from OpenClaw and other agentic systems before they execute. It is the guardrail between your AI and the world, built for individual developers and security teams alike.

why viberails

Say goodbye to ungoverned AI performing risky operations

And say hello to transparency, control, and accountability for your agentic operators

Inline Security

Configure which operations agents can run on their own, which require approval, and which remain manual.

Full Visibility

Inspect every tool call's parameters and responses. Query historical execution data.

Policy Enforcement

Write rules to block file deletions, restrict endpoints, or require human approval.

Complete Audit Trail

See execution logs showing which agents called which tools, when, and how.

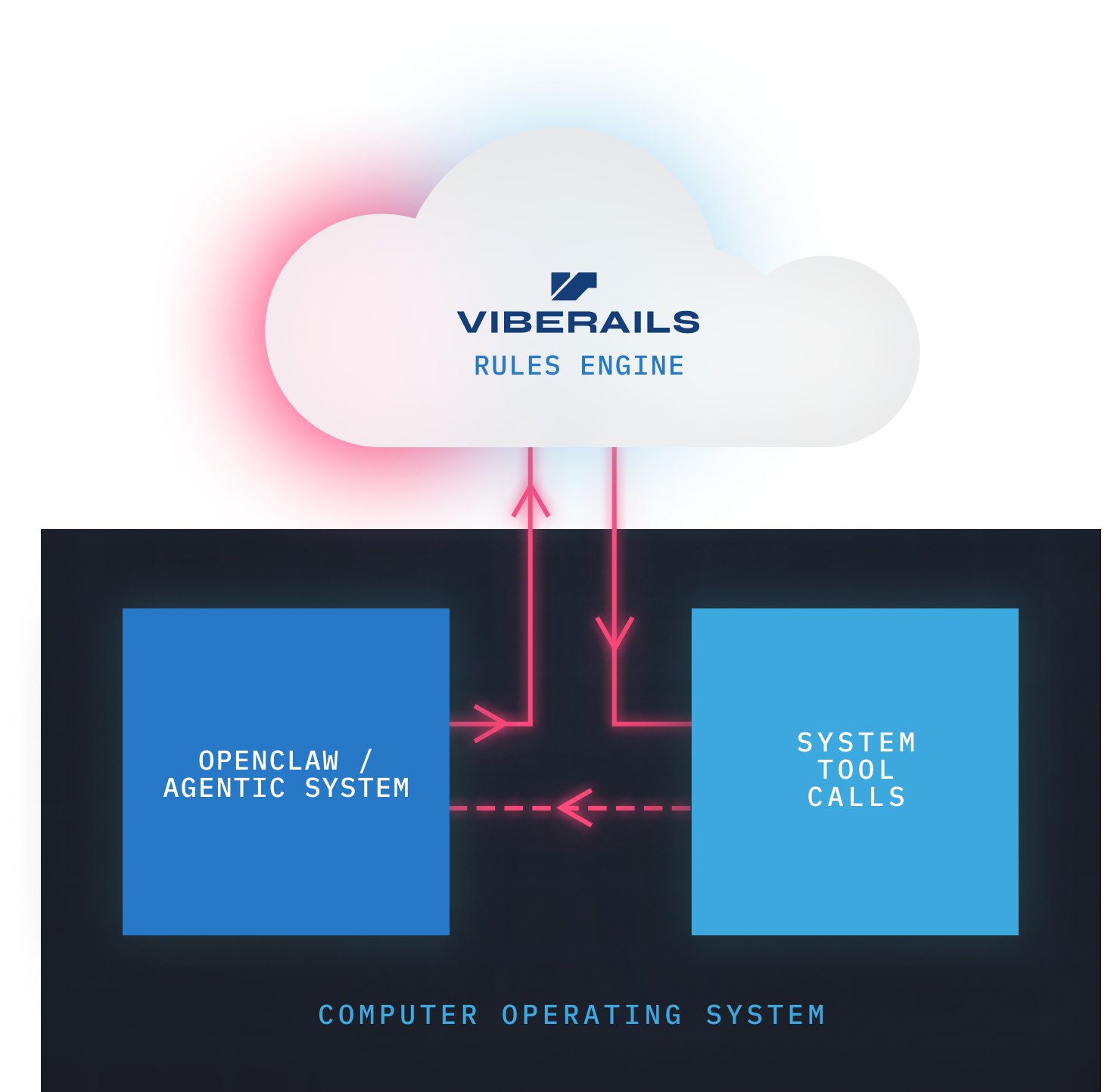

how it works

Security in the critical path

Viberails intercepts the tool calls you specify before they execute, giving you control over what your AI agents can do. With latency under 50 ms, you get security without the slowdown.

Intercept

Sit in the execution path between agent and tools. No tool call reaches your infrastructure without passing through Viberails first.
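To make the interception idea concrete, here is a minimal sketch of a wrapper that sits between an agent and a tool. It is an illustration only, not the Viberails API: `guarded`, `BlockedToolCall`, `read_file`, and `only_workspace` are hypothetical names, and the prefix check is deliberately simple.

```python
from typing import Any, Callable, Dict

# Hypothetical types for illustration; not part of any real Viberails API.
ToolFn = Callable[..., Any]
CheckFn = Callable[[str, Dict[str, Any]], bool]


class BlockedToolCall(Exception):
    """Raised when the check refuses a tool call."""


def guarded(tool_name: str, tool: ToolFn, check: CheckFn) -> ToolFn:
    """Return a wrapper that runs `check` before every call to `tool`."""

    def wrapper(**params: Any) -> Any:
        # The check runs first, so the tool never executes on a refused call.
        if not check(tool_name, params):
            raise BlockedToolCall(f"{tool_name} blocked with params {params}")
        return tool(**params)

    return wrapper


def read_file(path: str) -> str:
    """Stand-in tool that pretends to read a file."""
    return f"contents of {path}"


def only_workspace(tool_name: str, params: Dict[str, Any]) -> bool:
    """Assumed rule: only paths under /workspace/ are allowed."""
    return str(params.get("path", "")).startswith("/workspace/")


# The agent is handed `safe_read` instead of `read_file`.
safe_read = guarded("read_file", read_file, only_workspace)
```

Calling `safe_read(path="/workspace/notes.txt")` reaches the tool, while `safe_read(path="/etc/passwd")` raises `BlockedToolCall` before the tool runs.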

Validate

Write policies as code to check file paths, verify API endpoints, or flag suspicious parameters before execution.
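As a rough sketch of what a policy written as code could look like, the function below checks a file path and an API endpoint before a call is allowed to run. Everything here is assumed for illustration: `validate`, `Finding`, `ALLOWED_ROOT`, `ALLOWED_HOSTS`, and the parameter names `path` and `url` are hypothetical and do not come from Viberails.

```python
import posixpath
from dataclasses import dataclass
from typing import Any, Dict, List
from urllib.parse import urlparse

# Assumed policy settings, chosen only for this example.
ALLOWED_ROOT = "/workspace"
ALLOWED_HOSTS = {"api.example.com"}


@dataclass
class Finding:
    rule: str
    detail: str


def validate(tool: str, params: Dict[str, Any]) -> List[Finding]:
    """Return a list of policy findings; an empty list means the call passes."""
    findings: List[Finding] = []

    path = params.get("path")
    if path is not None:
        # Resolve the path relative to the allowed root and collapse any
        # ".." segments, so "../etc/passwd" cannot escape the root.
        normalized = posixpath.normpath(posixpath.join(ALLOWED_ROOT, path))
        inside = normalized == ALLOWED_ROOT or normalized.startswith(ALLOWED_ROOT + "/")
        if not inside:
            findings.append(Finding("path_outside_root", normalized))

    url = params.get("url")
    if url is not None:
        # Compare the parsed hostname against the allow-list.
        host = urlparse(url).hostname
        if host not in ALLOWED_HOSTS:
            findings.append(Finding("endpoint_not_allowed", str(host)))

    return findings
```

A call such as `validate("read_file", {"path": "../etc/passwd"})` yields a `path_outside_root` finding, while `validate("write_file", {"path": "notes/a.txt"})` returns an empty list.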

Control

Auto-approve safe operations, block dangerous ones, or route sensitive calls to human approval queues.
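The three outcomes described above (auto-approve, block, route to a human) can be sketched as a small decision function. This is a simplified illustration under stated assumptions: the `Decision` enum, the tool names in `SAFE_TOOLS` and `DANGEROUS_TOOLS`, and the in-memory `approval_queue` are hypothetical and not taken from Viberails.

```python
from collections import deque
from enum import Enum
from typing import Any, Deque, Dict, Tuple


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REQUIRE_APPROVAL = "require_approval"


# Assumed classification of tools, for illustration only.
SAFE_TOOLS = {"read_file", "list_dir"}
DANGEROUS_TOOLS = {"delete_file"}

# In-memory stand-in for a human approval queue.
approval_queue: Deque[Tuple[str, Dict[str, Any]]] = deque()


def decide(tool: str, params: Dict[str, Any]) -> Decision:
    """Classify a tool call as allowed, blocked, or pending human approval."""
    if tool in DANGEROUS_TOOLS:
        return Decision.BLOCK
    if tool in SAFE_TOOLS:
        return Decision.ALLOW
    # Anything not explicitly classified waits for a human reviewer.
    approval_queue.append((tool, params))
    return Decision.REQUIRE_APPROVAL
```

Defaulting unknown tools to human approval, instead of allowing them, is one possible design choice: it keeps new or unclassified operations from running unreviewed.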

use cases

Built for agentic security

Secure any system where AI agents interact with tools, APIs, or infrastructure.

1. Claude Code & Coding Agents

Prevent unauthorized file access, command execution, and data exfiltration.

2. Autonomous Agents

Add guardrails to AutoGPT, BabyAGI, and other autonomous systems.

3. Enterprise AI Deployments

Implement organization-wide policies for AI tool usage.

4. MCP Server Security

Validate requests and enforce access controls on MCP servers.

Put agent execution under control

Intercept and govern every action before it reaches production.
